If you took economics in college, you probably heard about the Laffer Curve, which illustrates that there is an optimum tax rate at which tax revenue is maximized. The same concept holds true for today’s websites and apps, where you have to balance your desire to customize content for visitors, deliver appropriate media performance and generate revenue.

So, let’s consider this theory as it applies to your website. You know you have to compress your images so your site loads quickly. But if you compress them too much, the images won’t look good.

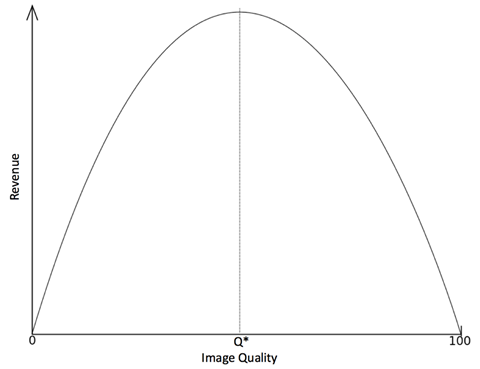

As a result, you often have to guess what image size to use so that the site doesn’t load too slowly and the images don’t look poor. Both problems turn off visitors and hamper your site’s ability to maximize revenue. The Laffer Curve equivalent for website images is illustrated in the accompanying chart.

It can be difficult to determine the optimal image quality – represented by Q* in the chart above – because a number of variables affect how you manage images on your site. For example, if you know your audience prefers a fast-loading website, you’ll opt to compress images as much as possible without severely impacting the quality.

But if your audience has high standards and you don’t want to risk bad perceptions about your brand, you may then opt for minimal compression, seeking to avoid even the most minor, barely noticeable image artifacts.

Even though we can use A/B testing and advanced analytics with micro-segments down to the 1:1 level to deliver personalized experiences, we ultimately end up guessing what people want. And that guesswork is potentially leaving a lot of money on the table.

For example, ecommerce giant Amazon noted that a page load slowdown of only 1 second would cost it $1.6 billion in sales revenue. Similarly, Google noted that a slowdown of just four tenths of a second in its search results would result in a loss of 8 million searches per day, which translates into millions of dollars less in online advertising revenue.

The relationship between site performance and revenue/conversion has been studied extensively, and the evidence is overwhelming that performance has an outsized effect on your key performance indicators (KPIs). Unusually, this potential A/B test starts not with a hypothesis but with an established theory. So why is it rarely, if ever, tested? My theory: the “content dilemma.”

The Content Dilemma

The content dilemma is a stubborn bottleneck that has plagued marketers, creative teams, product managers and developers alike. Even though you’d like to use content in unique ways for each web visitor, you can’t because you don’t have the capacity to create such a vast quantity of individualized content.

If you’ve tried to personalize your site, run a nurture campaign, or dealt with segment-based display advertising, you’ve no doubt experienced this limitation. As a result, you don’t have the capacity to test the impact of image quality for these personalized variations, since it is time-consuming and resource-intensive.

But you may be a bit closer to solving this dilemma than you think. New tools available today, like Cloudinary, can help you create dynamic content programmatically and on the fly, eliminating this creative bottleneck, and providing vital assistance when executing A/B testing to ensure that you’re maximizing revenue.

To determine the optimal image resolution and make the best use of bandwidth, you need three ingredients:

1. A way to conduct A/B/N tests

These can be internal systems or third-party tools, like Maxymiser, Optimizely, Adobe Target or SiteSpect. For technical reasons, a server-side solution or a SiteSpect account is recommended, since manipulating image URLs with JavaScript might introduce its own performance issues.
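If you go the server-side route with an internal system, one common approach is to bucket visitors deterministically so that each person sees the same experience on every page view. The snippet below is a minimal sketch of that idea, not how any of the tools named above work internally. The variant labels, the `assign_variant` function and the experiment name are all hypothetical.

```python
import hashlib

# Hypothetical labels for a four-way (A/B/C/D) image-quality test.
VARIANTS = ["A", "B", "C", "D"]


def assign_variant(visitor_id: str, experiment: str = "image-quality") -> str:
    """Return the same variant for the same visitor on every request."""
    # Include the experiment name so that separate tests bucket independently.
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Turn the hash into a bucket index between 0 and len(VARIANTS) - 1.
    bucket = int(digest, 16) % len(VARIANTS)
    return VARIANTS[bucket]
```

Because the assignment depends only on the visitor ID, you don't need to store anything extra to keep the experience consistent, and no JavaScript has to rewrite image URLs in the browser.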

2. A reporting mechanism

To get maximum value out of an A/B/N test, it is necessary to set up as many segments as possible, since these segments will help better define results. For instance, you can compare mobile vs. desktop, since there’s typically a huge variability in connection speed and browser performance. There are also distinctions between rural and urban, different browsers, new and returning customers, with hundreds of other unique factors coming into play.

Maxymiser is a great tool for this because it will identify what your important segments are without requiring you to set them all up ahead of time. Passing the visitor experience as a variable to your site analytics tool (Adobe Analytics, Coremetrics or WebTrends, to name a few) works well too, enabling you to examine exactly how behavior is affected by image size and quality.
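To make the segment comparison concrete, here is a rough sketch of the kind of breakdown you would want from your reporting: conversion rate per segment and per experience. It assumes you can export visits as simple (segment, variant, converted) records. That record shape and the `conversion_by_segment` function are assumptions for illustration, not the export format of any analytics tool mentioned here.

```python
from collections import defaultdict


def conversion_by_segment(events):
    """Compute the conversion rate for each (segment, variant) pair.

    `events` is an iterable of (segment, variant, converted) tuples,
    where `converted` is True if the visit ended in a conversion.
    """
    # (segment, variant) -> [visits, conversions]
    totals = defaultdict(lambda: [0, 0])
    for segment, variant, converted in events:
        cell = totals[(segment, variant)]
        cell[0] += 1
        cell[1] += int(converted)
    return {key: conversions / visits
            for key, (visits, conversions) in totals.items()}


# Made-up placeholder records, only to show the call shape.
example_events = [
    ("mobile", "A", True),
    ("mobile", "A", False),
    ("desktop", "B", True),
]
rates = conversion_by_segment(example_events)
```

A real test needs far more traffic per cell than this, plus a significance check, before you can call a winner for any one segment.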

3. Images

You will want to start with four different quality levels (A/B/C/D test), which will require you to take the number of images on your site and multiply by four. Don’t ask your creative team to do this; they will never talk to you again.

Here’s where a tool like Cloudinary is valuable because it enables you to quickly create various images simply by changing a parameter in the image URL. The ability to make these image changes on the fly will not only help you execute the test, but also maintain the winning experiences going forward.
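As a rough illustration of "changing a parameter in the image URL," the sketch below builds one delivery URL per test experience using Cloudinary's `q_` (quality) URL parameter. The four quality values, the `variant_image_url` helper, and the `demo` cloud name and `sample.jpg` image are placeholders chosen for this example, so swap in your own account and whatever levels you decide to test.

```python
# Assumed quality level for each experience; the values are illustrative only.
QUALITY_BY_VARIANT = {"A": 90, "B": 75, "C": 60, "D": 45}


def variant_image_url(cloud_name: str, public_id: str, variant: str) -> str:
    """Build an image URL whose quality setting matches the test variant."""
    quality = QUALITY_BY_VARIANT[variant]
    # q_<n> asks for the image to be delivered at that quality level (1-100).
    return (
        f"https://res.cloudinary.com/{cloud_name}"
        f"/image/upload/q_{quality}/{public_id}"
    )


# One URL per experience for the same source image.
urls = {v: variant_image_url("demo", "sample.jpg", v) for v in QUALITY_BY_VARIANT}
```

The creative team supplies a single original image, and the four test versions come from the URL alone. Once a winner emerges, keeping it in place means updating one number in the mapping.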

As you can see, there are many variables that can impact how you manage website images – from resolution and image size to bandwidth usage. And to manage the three ingredients listed above, there are several tools available to take the guesswork out of the testing process. These tools will help you overcome the content dilemma and manage images in a way that optimizes your site’s performance, enabling greater personalization and maximizing revenue generation.
